Prefer a video? Watch Matt present a wider version of this topic at YOW! Perth in 2022.

It's a Multi-Protocol World

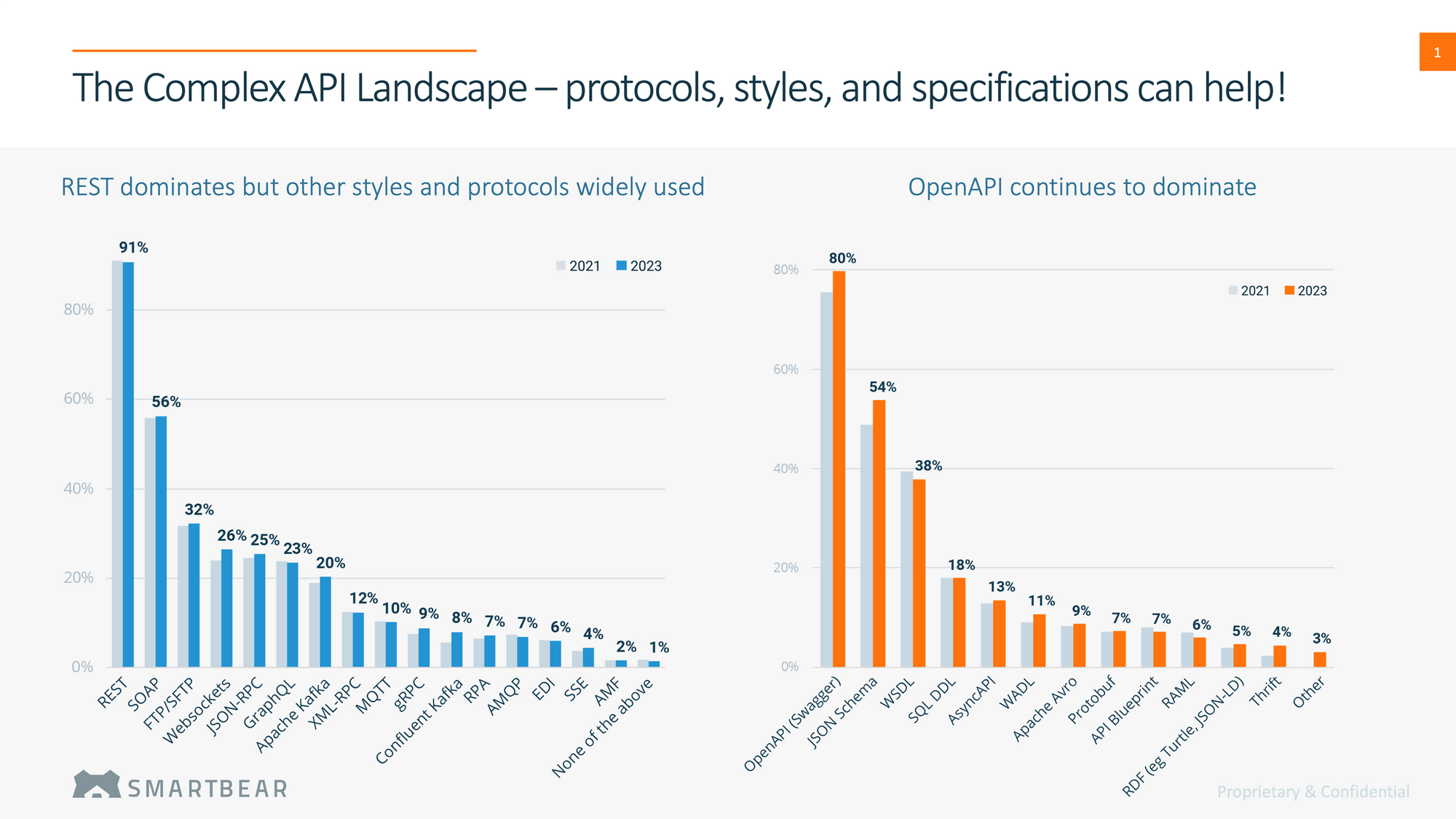

In SmartBear's State of Software Quality API (2023) report, 9% of respondents reported using gRPC in their organisations, with 7% also reporting the use of Protobuf. This follows a trend we've seen over multiple editions now, where a majority of organisations operate within a multi-protocol environment (81%), and 57% have to manage 3 or more protocols.

In this article, we look into gRPC and Protobuf, discuss their benefits and the types of breaking changes they are susceptible to, and finally show how Pact and PactFlow can be used to support gRPC contract testing to improve safety and reliability.

What is gRPC?

From https://grpc.io/:

gRPC is a modern open source high performance Remote Procedure Call (RPC) framework that can run in any environment. It can efficiently connect services in and across data centers with pluggable support for load balancing, tracing, health checking and authentication. It is also applicable in last mile of distributed computing to connect devices, mobile applications and browsers to backend services.

In our experience, gRPC is often used for internal service communication as a replacement for the popular JSON-over-HTTP (or REST) paradigm, where domain concepts (resources) are represented and transferred over HTTP, and HTTP verbs are used as operations to act on them.

Remote Procedure Calls (or RPCs for short) by contrast, and as the name implies, are more concerned with procedures: doing things. They can be made entity-centric if desired; however, they need not be. For this reason, RPCs can be simpler to implement, as they don't require you to map your requirements onto resources and operations in the way REST does. Many RPC frameworks are also quite opinionated, which further reduces decision making and enables rich tooling that can, for example, autogenerate client SDKs or produce documentation.

Whilst RPC-style communication has existed for decades, gRPC has a particular focus and has taken root in systems that require:

- Performance

- Scale

- Polyglot language support

- Multiple communication styles such as bi-directional streaming, fire-and-forget messages, and server push

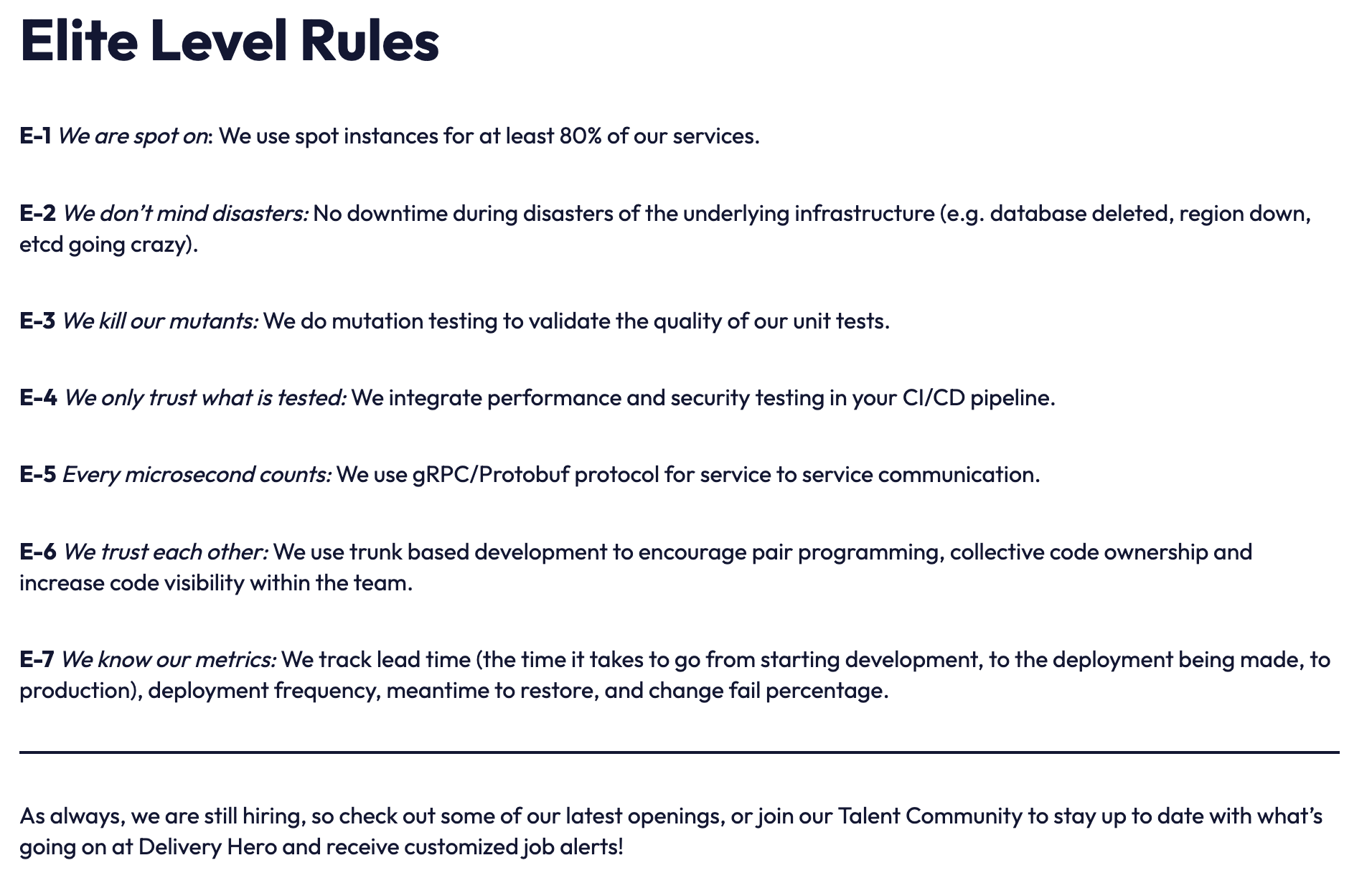

Companies like Delivery Hero, Square, Netflix, Slack, Medium, and Afterpay have all decided to use gRPC on the basis of performance. Here is a rule (E-5) from the Delivery Hero Reliability Manifesto that clearly shows why they've bet on gRPC:

Fun fact: the 'g' in gRPC stands for something different every release.

What is Protobuf?

If gRPC is an alternative to the REST paradigm, Protocol Buffers – or Protobuf for short – replaces the most common REST payload type: JSON.

Protocol buffers provide a language-neutral, platform-neutral, extensible mechanism for serializing structured data in a forward-compatible and backward-compatible way. It’s like JSON, except it's smaller and faster, and it generates native language bindings.

Its benefits include:

- Being smaller and faster than many other serialisation formats

- Built-in forward- and backward-compatibility (schema evolution)

- Code generation tooling to create server/client SDKs
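To make the size claim concrete, here is a minimal sketch of how the Protobuf wire format encodes a single `int32` field, written in plain Java without the Protobuf library. The class and method names (`VarintSketch`, `writeVarint`, `encodeInt32Field`) and the JSON key `a` are invented for illustration; a real project would rely on the generated bindings rather than hand-rolled encoding.

```java
import java.io.ByteArrayOutputStream;
import java.nio.charset.StandardCharsets;

// Illustrative only: hand-rolls the Protobuf wire encoding of one int32 field.
final class VarintSketch {

    // Writes a value as a base-128 varint: 7 bits per byte, lowest group first.
    static void writeVarint(ByteArrayOutputStream out, long value) {
        while ((value & ~0x7FL) != 0) {
            out.write((int) ((value & 0x7F) | 0x80)); // high bit set: more bytes follow
            value >>>= 7;
        }
        out.write((int) value);
    }

    // Encodes "field fieldNumber = value": a tag (field number + wire type), then the varint.
    static byte[] encodeInt32Field(int fieldNumber, int value) {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        writeVarint(out, ((long) fieldNumber << 3) | 0); // wire type 0 means varint
        writeVarint(out, value);
        return out.toByteArray();
    }

    public static void main(String[] args) {
        byte[] proto = encodeInt32Field(1, 150);                      // bytes 08 96 01
        byte[] json = "{\"a\":150}".getBytes(StandardCharsets.UTF_8); // the same data as JSON
        System.out.println("protobuf bytes: " + proto.length + ", JSON bytes: " + json.length);
    }
}
```

Running it prints `protobuf bytes: 3, JSON bytes: 9`. The saving comes from replacing the field name with a numeric tag and from the compact integer encoding, which is also why both sides need to agree on the schema.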

It's common for gRPC and Protobuf to be paired together, but it's important to note that they need not be used together. gRPC can work with other payload types, and Protobuf can be used with other transports and communication frameworks.

In fact, it's quite common for Protobuf to be used outside of gRPC as it turns out to be a good fit for streaming solutions such as Kafka, high-volume messaging systems, data pipelines and even as the payload for more performant HTTP systems.

The Case For Contract Testing gRPC and Protobuf

We've written about the case for contract testing gRPC and Protobuf before, but to recap, the following are ways we can break a contract with our consumers:

- Protocol-level breaking changes

- Handling changes to message semantics

- Coordinating changes (forward-compatibility) and dependency management

- Providing transport layer safety (less of an issue when Protobuf is paired with gRPC)

- Ensuring narrow type safety (strict encodings)

- Loss of visibility into real-world client usage

- Optionals and defaults: a race to incomprehensible APIs
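The last item is easiest to see with a small example. In proto3, a scalar field without explicit presence reads as its zero value when it is absent, so a consumer cannot distinguish "the provider sent 0" from "the provider stopped sending this field". The sketch below models that behaviour with a plain Java map; `DefaultsSketch` and `readFloatField` are hypothetical names, and the map merely stands in for a decoded message.

```java
import java.util.Map;

// Illustrative only: models proto3 implicit field presence with a plain map.
final class DefaultsSketch {

    // Mimics a generated getter for a proto3 scalar: absent fields read as zero.
    static float readFloatField(Map<Integer, Float> decodedFields, int fieldNumber) {
        return decodedFields.getOrDefault(fieldNumber, 0.0f);
    }

    public static void main(String[] args) {
        Map<Integer, Float> zeroValue = Map.of(1, 0.0f); // the value really is zero
        Map<Integer, Float> fieldRemoved = Map.of();     // the provider dropped field 1

        // Both read as 0.0, so the consumer cannot tell the two situations apart.
        System.out.println(readFloatField(zeroValue, 1) == readFloatField(fieldRemoved, 1)); // prints true
    }
}
```

A schema check alone will not flag this kind of change, which is part of why example-based contracts are useful alongside the proto definition.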

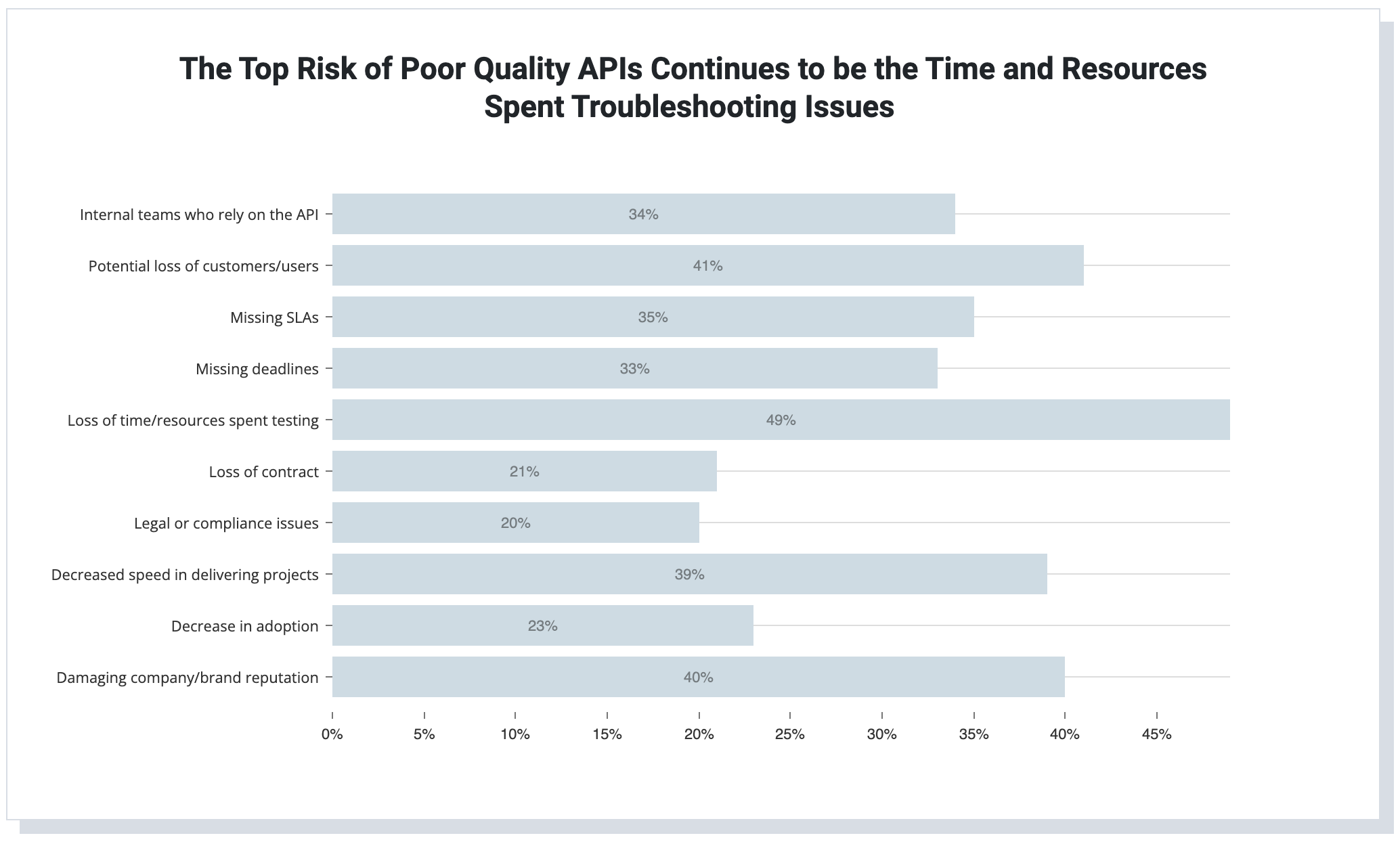

Returning to our survey, then, it's not surprising that 21% of respondents identified "loss of contract" as their top risk from poor-quality APIs, and 34% were concerned about impacting other internal teams that rely on their APIs.

So how can we address some of these problems?

Introducing Contract Testing for gRPC

gRPC contract testing is made possible with the Pact Plugin Framework. Plugins enable you to extend the capabilities of Pact in order to test novel transports or content types or, in the case of gRPC/Protobuf - both!

The steps to contract testing with gRPC and Protobuf are the same as with any regular Pact test, the only difference being that we need to install a plugin to validate the new transport and content types:

- Install the gRPC/protobuf plugin (Update: More recent versions of your tooling may automatically discover and download this for you)

- Write a consumer test

- Publish the contract

- Verify your provider

Let's run through these in turn using a simple Java example: the Area Calculator.

gRPC Contract Testing Example: The Area Calculator

The Area Calculator gRPC example can receive a shape via a gRPC call and return its area. It uses Protobuf as the payload, so that we can try out contract testing with both at once. See the proto file for the full definition of the service.

The proto file has a single service method calculateOne, which these examples will be testing, and a few different-shaped requests/responses:

    service Calculator {
      // RPC method we are testing
      rpc calculateOne (ShapeMessage) returns (AreaResponse) {}
    }

    // A polymorphic, Protobuf data type
    message ShapeMessage {
      oneof shape {
        Square square = 1;
        Rectangle rectangle = 2;
        Circle circle = 3;
        Triangle triangle = 4;
        Parallelogram parallelogram = 5;
      }
    }

    // One of the polymorphic shapes we can calculate the area for
    message Square {
      float edge_length = 1;
    }

    ...

    // Response body
    message AreaResponse {
      repeated float value = 1;
    }

The full project can be downloaded here.
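To show what the `oneof` means for the code on either side of the call, here is a rough, library-free sketch of how a provider might dispatch on the populated shape. Everything in it is hypothetical: `OneofSketch` is a hand-written stand-in for the generated bindings, only `Square` and `Rectangle` are modelled, and the rectangle's `length` and `width` fields are taken from the consumer test later in this article rather than from the elided part of the proto file.

```java
import java.util.List;

// Illustrative only: a hand-written stand-in for the generated ShapeMessage bindings.
final class OneofSketch {

    enum ShapeCase { SQUARE, RECTANGLE }

    // A oneof has at most one populated member; the case tag records which one.
    // (The "nothing set" case is left out here for brevity.)
    static final class ShapeMessage {
        final ShapeCase shapeCase;
        final float first;  // edge_length (square) or length (rectangle)
        final float second; // width (rectangle); unused for a square

        private ShapeMessage(ShapeCase shapeCase, float first, float second) {
            this.shapeCase = shapeCase;
            this.first = first;
            this.second = second;
        }

        static ShapeMessage square(float edgeLength) {
            return new ShapeMessage(ShapeCase.SQUARE, edgeLength, 0f);
        }

        static ShapeMessage rectangle(float length, float width) {
            return new ShapeMessage(ShapeCase.RECTANGLE, length, width);
        }
    }

    // Hypothetical provider logic for calculateOne: dispatch on the populated case.
    // The result is a list because AreaResponse.value is a repeated field.
    static List<Float> calculateOne(ShapeMessage shape) {
        switch (shape.shapeCase) {
            case SQUARE:
                return List.of(shape.first * shape.first);
            case RECTANGLE:
                return List.of(shape.first * shape.second);
            default:
                throw new IllegalArgumentException("unsupported shape: " + shape.shapeCase);
        }
    }

    public static void main(String[] args) {
        System.out.println(calculateOne(ShapeMessage.rectangle(3f, 4f))); // prints [12.0]
    }
}
```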

Step 1: Install the Protobuf Plugin

Once you have installed the Pact CLI tools, you can install the plugin by its registered name (protobuf), and it will be automatically discovered and installed into the relevant location on your machine:

    pact-plugin-cli -y install protobuf

Step 2: gRPC Consumer Contract Test

Now that you have the plugin installed, you can write a test for your gRPC client. Just like any other Pact test, we must set up the mock with our expectations (calculateRectangleArea with the @Pact annotation), and then exercise our gRPC client and check that it works as expected (calculateRectangleArea with the @PactTestFor annotation).

The commented JUnit test below should look familiar to experienced Pact users with some subtle differences to accommodate the use of plugins:

    /**
     * Main test class for the AreaCalculator calculate service method call.
     */
    @ExtendWith(PactConsumerTestExt.class)
    @PactTestFor(providerName = "area-calculator-provider", providerType = ProviderType.SYNCH_MESSAGE, pactVersion = PactSpecVersion.V4)
    public class PactConsumerTest {

      /**
       * Configures the Pact interaction for the test. This will load the Protobuf plugin, which will provide all the
       * Protobuf and gRPC support to the Pact framework.
       */
      @Pact(consumer = "protobuf-consumer")
      V4Pact calculateRectangleArea(PactBuilder builder) {
        return builder
          // Tell Pact we need the Protobuf plugin
          .usingPlugin("protobuf")
          // We will use a V4 synchronous message interaction for the test
          .expectsToReceive("calculate rectangle area request", "core/interaction/synchronous-message")
          // We need to pass all the details for the interaction over to the plugin
          .with(Map.of(
            // Configure the proto file, the content type and the service we expect to invoke
            "pact:proto", filePath("../proto/area_calculator.proto"),
            "pact:content-type", "application/grpc",
            "pact:proto-service", "Calculator/calculateOne",

            // Details on the request message (ShapeMessage) we will send
            "request", Map.of(
              "rectangle", Map.of(
                "length", "matching(number, 3)",
                "width", "matching(number, 4)"
              )),

            // Details on the response message we expect to get back (AreaResponse)
            "response", List.of(
              Map.of(
                "value", "matching(number, 12)"
              )
            )
          ))
          .toPact();
      }

      /**
       * Main test method. This method will receive a gRPC mock server and example request message, which we will use the
       * generated stub classes to send to the mock server. The mock server will return the AreaResponse message configured
       * from the values in the setup method above.
       */
      @Test
      @PactTestFor(pactMethod = "calculateRectangleArea")
      @MockServerConfig(implementation = MockServerImplementation.Plugin, registryEntry = "protobuf/transport/grpc")
      void calculateRectangleArea(MockServer mockServer, V4Interaction.SynchronousMessages interaction) throws InvalidProtocolBufferException {
        ManagedChannel channel = ManagedChannelBuilder.forTarget("127.0.0.1:" + mockServer.getPort())
          .usePlaintext()
          .build();
        CalculatorGrpc.CalculatorBlockingStub stub = newBlockingStub(channel);

        // Correct request
        AreaCalculator.ShapeMessage shapeMessage = AreaCalculator.ShapeMessage.parseFrom(interaction.getRequest().getContents().getValue());
        AreaCalculator.AreaResponse response = stub.calculateOne(shapeMessage);
        assertThat(response.getValue(0), equalTo(12.0F));
      }
    }

If successful, this should produce a pact file in the project's build directory.
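The `matching(number, 3)` strings in the consumer test are matching expressions: each one pairs a matcher type (`number`) with an example value (`3`). The example is what the mock server and the generated request use, while the matcher type is what gets enforced later during provider verification. The toy parser below illustrates that split. It is an assumption-laden sketch, not the plugin's implementation: `MatchingExpressionSketch`, `parse` and `satisfies` are invented names, and it only understands the single `matching(type, example)` form used in this article.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Illustrative only: a toy reader for expressions such as "matching(number, 3)".
final class MatchingExpressionSketch {

    private static final Pattern EXPRESSION =
        Pattern.compile("matching\\(\\s*(\\w+)\\s*,\\s*(.+?)\\s*\\)");

    // Returns a two-element array: { matcherType, exampleValue }.
    static String[] parse(String expression) {
        Matcher m = EXPRESSION.matcher(expression.trim());
        if (!m.matches()) {
            throw new IllegalArgumentException("not a matching expression: " + expression);
        }
        return new String[] { m.group(1), m.group(2) };
    }

    // Toy check: does an actual value satisfy the given matcher type?
    static boolean satisfies(String matcherType, Object actual) {
        if ("number".equals(matcherType)) {
            return actual instanceof Number;
        }
        throw new UnsupportedOperationException("matcher not modelled here: " + matcherType);
    }

    public static void main(String[] args) {
        String[] parsed = parse("matching(number, 12)");
        System.out.println(parsed[0] + " / " + parsed[1]);  // prints number / 12
        System.out.println(satisfies(parsed[0], 12.0f));    // prints true
        System.out.println(satisfies(parsed[0], "12"));     // prints false
    }
}
```

Because matching is by type rather than by exact value, the provider is free to return any number for the area, but not a string that happens to contain digits.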

Step 3: Publish gRPC Contract

Publishing your contract is the same as any other Pact test, and we always recommend using our CLI for this job:

    pact-broker publish \
      --consumer-app-version 1.0.0 \
      ../consumer-jvm/build/pacts/protobuf-consumer-area-calculator-provider.json

NOTE: You will need to ensure the relevant PACT_BROKER_* credentials are set in your terminal environment.

Once your contract is published to PactFlow, you can see the integration and contract details, as you would with any other.

Step 4: gRPC Provider Contract Test

Now that we have a contract that captures the consumer's needs, we need to ensure the provider satisfies them.

Depending on the language and toolchain you use and the environment you're testing against, you might be able to run this natively in those tools (such as from within JUnit, Gradle, or Maven in JVM ecosystems), or you may choose to execute the tests externally using our CLI. In this example, we'll use the CLI as it can be used to test any language, framework or system so long as it's accessible to the verifier.

You will first need to start your gRPC service locally (preferred), or have it deployed to a test environment (less preferable). Once the service is running, you can run the verifier, passing the contract you wish to validate and the port on which your gRPC service is listening:

    gRPC/area_calculator/provider-jvm:
    ❯ pact_verifier_cli -f ../consumer-jvm/build/pacts/protobuf-consumer-area-calculator-provider.json -p 37757 -l none
    2022-05-02T04:29:36.636972Z INFO main pact_verifier: Pact file requires plugins, will load those now
    2022-05-02T04:29:36.638556Z WARN tokio-runtime-worker pact_plugin_driver::metrics:

    Please note:
    We are tracking this plugin load anonymously to gather important usage statistics.
    To disable tracking, set the 'pact_do_not_track' environment variable to 'true'.

    2022-05-02T04:29:36.873435Z INFO main pact_verifier: Running provider verification for 'calculate rectangle area request'

    Verifying a pact between protobuf-consumer and area-calculator-provider

      calculate rectangle area request

      Test Name: io.pact.example.grpc.consumer.PactConsumerTest.calculateRectangleArea(MockServer, SynchronousMessages)

      Given a Calculator/calculate request
          with an input .area_calculator.ShapeMessage message
          will return an output .area_calculator.AreaResponse message [OK]

👏 And there we have it. We have confirmed that our gRPC client is able to call the calculator with a Rectangle shape, and that it can receive, parse and understand the AreaResponse message.

If the provider later decided to remove Square from the list of supported shapes, this would be a safe change as far as this consumer is concerned, because its contract only exercises Rectangle. If the provider instead decided to accept string-encoded numbers (for example, to encode higher-precision values), verification would fail because the field types would no longer match the contract.
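One way to picture the difference between those two changes is to treat the contract as the set of fields a consumer actually relies on, and ask whether the provider still offers each of them with the same type. The sketch below is a deliberate simplification under that assumption: Pact's verifier replays the recorded request against the running provider rather than comparing field lists, and the class name, method name and field paths here are all invented for illustration.

```java
import java.util.HashMap;
import java.util.Map;

// Illustrative only: a conceptual model of consumer-driven compatibility.
final class CompatibilitySketch {

    // True when every field the consumer relies on is still offered with the same type.
    static boolean satisfies(Map<String, String> consumerNeeds, Map<String, String> providerOffers) {
        for (Map.Entry<String, String> need : consumerNeeds.entrySet()) {
            if (!need.getValue().equals(providerOffers.get(need.getKey()))) {
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        // What the rectangle contract exercises (hypothetical field paths).
        Map<String, String> consumerNeeds = Map.of(
            "request.rectangle.length", "number",
            "request.rectangle.width", "number",
            "response.value", "number");

        // Change 1: the provider drops Square, so it offers only the rectangle fields.
        Map<String, String> withoutSquare = new HashMap<>(consumerNeeds);

        // Change 2: the provider switches a rectangle field to a string encoding.
        Map<String, String> stringEncoded = new HashMap<>(consumerNeeds);
        stringEncoded.put("request.rectangle.length", "string");

        System.out.println(satisfies(consumerNeeds, withoutSquare)); // prints true
        System.out.println(satisfies(consumerNeeds, stringEncoded)); // prints false
    }
}
```

The useful property is that the provider only has to preserve what consumers demonstrably use, which leaves it free to change or remove everything else.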

gRPC Contract Testing Guarantees

By using contract testing, we were able to address 6 out of the 7 problems that gRPC and Protobuf introduce:

- ✅ Protocol-level breaking changes – Relevant protocol mismatches will be caught during the contract test, because the plugin will not be able to either parse or verify the expected consumer types.

- ✅ Handling changes to message semantics – The contract test captures the representative examples and resolves ambiguous possibilities.

- ✅ Coordinating changes (forward-compatibility) and dependency management – Through the use of the Pact Broker and versioning, Pact can allow teams to ship safely into production with the comfort that they are compatible with their consumers.

- ✅ Providing transport layer safety (less of an issue for gRPC) – We haven't shown this here, but if Protobuf were used over HTTP, the transport would be covered with the same level of confidence as any other Pact HTTP test.

- ✅ Ensuring narrow type-safety (strict encodings) – With matchers and expressions, we can narrow the allowed sets of values during validation.

- ✅ Loss of visibility into real-world client usage – With Pact, we can see each consumer's needs down to the level of individual fields.

- ❓ Optionals and defaults: a race to incomprehensible APIs – Pact's "specification by example" approach improves comprehensibility, but it cannot currently fully resolve the dilemma.

Summary

gRPC and Protobuf are a powerful combination, allowing organisations to create large-scale, high-volume and high-performance communication systems. However, the technology opens up several possibilities for introducing breaking changes that could spell disaster for teams operating those platforms. With the introduction of the Pact Plugin Framework and the gRPC/Protobuf Plugin, Pact enables teams to apply contract testing to prevent such problems and safely evolve their system.

So, what are you waiting for? Get started with your 👉 gRPC contract testing journey, or watch the video below 👇 to see the example in this post in action.